Introduction

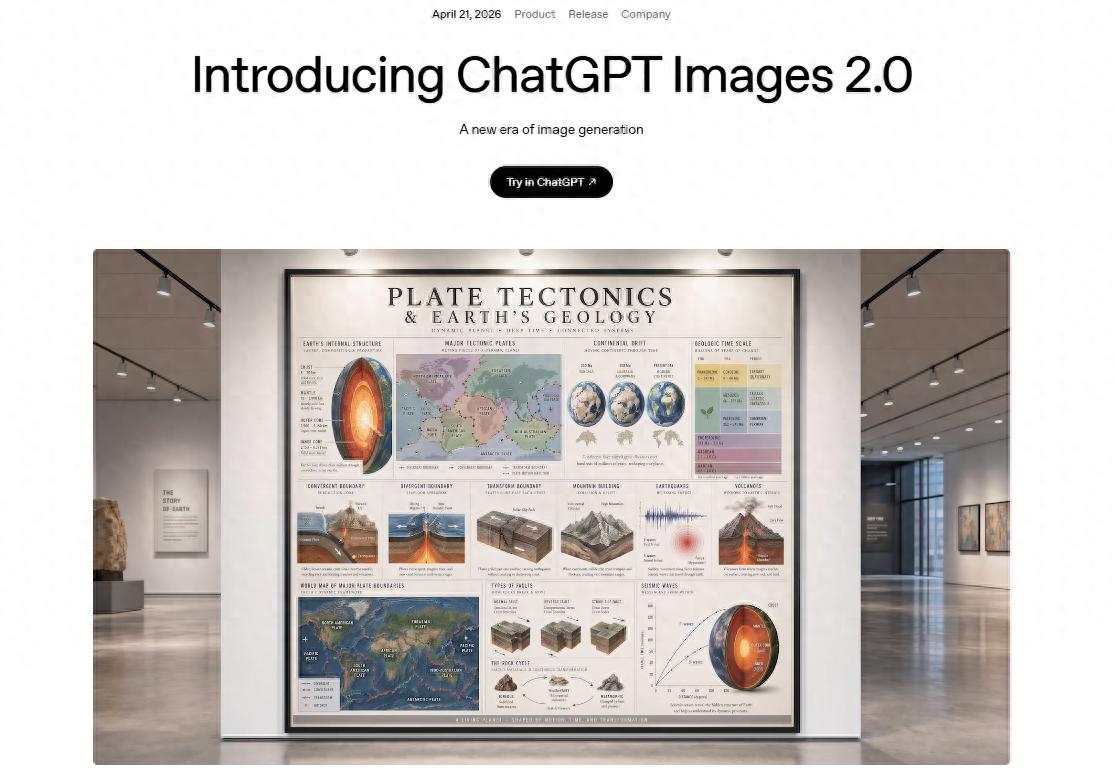

On April 21, local time, OpenAI officially launched the ChatGPT Images 2.0 model, the latest upgrade to its image generation capabilities within the ChatGPT platform.

This model aims to enhance adherence to image generation instructions, detail fidelity, and text rendering quality, showing significant improvements, especially in handling textual elements within images.

ChatGPT Images 2.0 focuses on text processing capabilities.

ChatGPT Images 2.0 focuses on text processing capabilities.

According to OpenAI’s official release, this updated model provides basic access for all ChatGPT users, with observations indicating that it can generate approximately five images per day. Paid users can utilize an enhanced “image thinking” mode, which integrates reasoning capabilities, multi-output generation, and web search tools.

Both OpenAI and user evaluations agree that the most significant improvement of ChatGPT Images 2.0 lies in the quality of text generation within images. For a long time, diffusion models have faced challenges in handling small-sized text, as the pixel area occupied by text is minimal compared to the entire image. Consequently, models often prioritize reconstructing larger areas, leading to spelling errors or unnatural fonts.

OpenAI states that Images 2.0 achieves “unprecedented specificity and fidelity,” effectively conceptualizing complex images and faithfully presenting user-specified details, including small text, icons, user interface elements, dense compositions, and subtle stylistic constraints, with output resolutions up to 2K.

Tech media Tech Crunch’s actual testing corroborated this advancement. The platform used prompts to generate a menu for a Mexican restaurant, resulting in dish names and prices that were reasonably accurate, making it suitable for use in a real restaurant, difficult to distinguish as AI-generated. In contrast, a similar menu generated two years ago using another model contained “multiple obvious spelling errors.”

Generating a stylized menu with clear, non-clinging fonts, image from TechCrunch.

Generating a stylized menu with clear, non-clinging fonts, image from TechCrunch.

In addition to English text, the model has also improved its handling of non-Latin scripts, supporting accurate rendering in various languages, including Chinese. This makes it more practical for generating images that include multilingual elements.

For instance, the Observer used the free generation feature with simple instructions to create a promotional poster for its membership service, “Observer.” The poster displayed clear Chinese characters with minimal instances of the stroke-clinging issues seen in previous AI-generated images, and the layout was reasonable, achieving a high level of completion, significantly more user-friendly compared to earlier image models.

The ChatGPT-generated “Observer” poster, with discrepancies in the text removed, achieves a high level of overall design completion.

The ChatGPT-generated “Observer” poster, with discrepancies in the text removed, achieves a high level of overall design completion.

On the other hand, the image thinking mode also introduces reasoning capabilities, allowing the model to perform web searches for the latest information and conduct self-checks to optimize output. These capabilities mean that the image generation speed is not as fast as directly conversing with ChatGPT, but during tests involving complex content like multi-panel comics, the model still only took a few minutes to generate.

It is important to note that in the field of AI image generation, diffusion models and autoregressive models are two mainstream technical routes. Currently, cutting-edge models often combine both, but OpenAI has not explained which underlying architecture this model belongs to. However, as OpenAI pushes the advancement of image generation technology, it will inevitably increase the difficulty for humans to recognize AI-generated content, raising concerns about misleading content.

U.S. financial media Business Insider believes that such models, capable of generating realistic images, can easily be used to create misleading pictures or forged photos. While the model’s “thinking” mode connects to web searches, aiding in fact-checking, it relies on a database up to December 2025, which could amplify the timeliness risks of the generated content over time.

As seen in the generated “Observer” poster, discrepancies in text content and actual rights raise concerns that if AI autonomously generates text for news images, product promotions, or social media content without clear AI generation markings, it could lead to the spread of misinformation.

Historical experience shows that similar model tools have been used by wrongdoers to create deepfake content, making platform responsibility and user self-discipline equally important. However, OpenAI has yet to disclose specific new safety mechanisms for Images 2.0. Additionally, OpenAI has not revealed the sources of training data, which could lead to copyright disputes if the model generates images highly similar to existing human works.

Nevertheless, setting aside these risks, from a technology-for-good perspective, ChatGPT Images 2.0 represents a pragmatic iterative upgrade. Its improvements in text rendering, instruction adherence, and complex composition bring AI image generation closer to practical daily use, rather than remaining merely a conceptual demonstration. Simple test results indicate that the model can already produce usable outcomes in basic commercial scenarios, marking a breakthrough from the technical bottlenecks of the past two years.

Comments

Discussion is powered by Giscus (GitHub Discussions). Add

repo,repoID,category, andcategoryIDunder[params.comments.giscus]inhugo.tomlusing the values from the Giscus setup tool.