On April 16, 2026, Anthropic officially released Claude Opus 4.7, just over two months after the launch of Opus 4.6.

Following a flurry of product and model updates, Anthropic’s release of a new model feels like a major move. Many reports have labeled Opus 4.7 as the “strongest model,” with alarmist claims of “humans are done” and “job loss warnings” circulating widely.

However, it’s essential to examine what Anthropic has actually communicated.

The tone of this release is quite unusual. Anthropic stated directly in the announcement that the capabilities of Opus 4.7 are inferior to those of Claude Mythos Preview, which is only available to select partners like Apple, Google, Microsoft, and Nvidia, leaving regular developers and users without access.

Moreover, it is noteworthy that Opus 4.7 is not just weaker than the mythical Mythos; it also falls short of its predecessor in key areas. A striking figure in its performance metrics shows that the long-context benchmark MRCR v2 @1M dropped from 78.3% in Opus 4.6 to 32.2% in Opus 4.7, a staggering decline of 46 percentage points.

Such a significant reduction in flagship model capabilities is rare, and it is a deliberate choice by Anthropic.

Thus, while many continue to uncritically praise every new model as the “strongest,” they may be out of sync with Anthropic’s own trajectory.

Opus 4.7 is not a release aimed at becoming the “strongest model”; it represents a strategic choice with clear trade-offs, differing from the typical release strategies of leading model manufacturers. Anthropic seems to be aligning more closely with the release strategies of companies like Apple and Microsoft, which are in the mature commercialization phase of their products.

This may be the true significance of 4.7.

1. Programming Capabilities: Real Improvements Behind the Numbers

To better understand these changes, it helps to look closely at what Anthropic actually shipped with Opus 4.7.

Official Announcement:

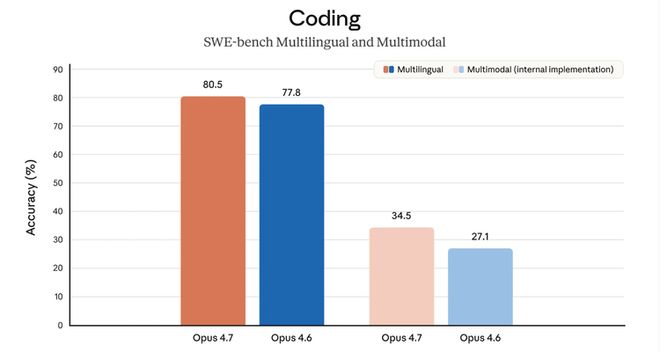

Programming performance is the focal point of this release.

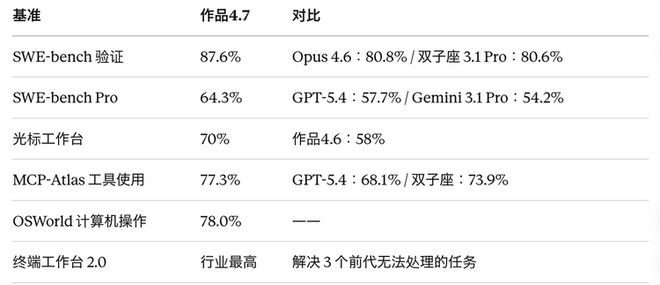

SWE-bench Verified (500 real GitHub issues requiring the model to write passing patches) improved from 80.8% in Opus 4.6 to 87.6% in Opus 4.7, a nearly 7 percentage point increase, making it the top performer among publicly available models. In comparison, Gemini 3.1 Pro stands at 80.6%, showing a clear gap.

SWE-bench Pro, a more challenging version covering complete engineering pipelines across four programming languages, saw Opus 4.7 rise from 53.4% to 64.3%, an 11 percentage point jump. Compared to GPT-5.4’s 57.7% and Gemini 3.1 Pro’s 54.2%, Opus 4.7 clearly leads in this benchmark.

CursorBench, a practical benchmark from Cursor measuring the model’s programming assistance quality in real IDE environments, improved from 58% in Opus 4.6 to 70% in Opus 4.7, a 12 percentage point increase. Michael Truell, co-founder of Cursor, stated, “This is a meaningful leap in capability, with stronger creative reasoning when solving problems.”

Partner testing data points in a clear direction: Opus 4.7 improves significantly on complex, long-running programming tasks that require coherent context across files. This was a major complaint from Opus 4.6 users over the past two months: the model would abandon tasks midway or get lost in multi-file bugs.

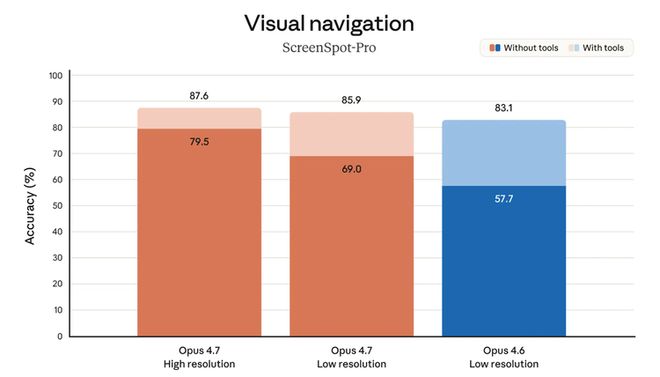

2. Visual Capabilities: The Most Underestimated Improvement

The visual accuracy benchmark XBOW jumped from 54.5% to 98.5%. This is not an incremental improvement; it is a step change, as if the capability had been rebuilt.

Specification changes:

What practical impacts does this have?

For teams building computer use products, this upgrade could be decisive. In the Opus 4.6 era, computer use was good enough to “do demos but not go into production” because of high error rates and unpredictability. A visual accuracy of 98.5% suggests the feature now meets the threshold for reliable deployment. Several tech blogs noted that if you had shelved computer use product plans because of Opus 4.6’s error rates, 4.7 has cleared that obstacle.

Feedback from Reddit (r/ClaudeAI) indicated that users found the improvement in visual capabilities crucial, with one user mentioning, “The enhancement in visual capability is key. I previously worked on many edge projects trying to iterate improvements in output through visual feedback loops, and the results were chaotic. I’m eager to see how 4.7 handles this issue.”

Beyond computer use, other scenarios that benefit include document scanning and analysis (reading smaller fonts and recognizing finer details in charts), screenshot understanding, dashboard applications, and complex PDF processing.

Note the cost implications: higher-resolution images consume more tokens. If your application does not require fine image detail, downsample images before sending them to the model.
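As a rough illustration of the downsampling advice, the sketch below computes a reduced image size that preserves aspect ratio. The 1600 px cap is a hypothetical value chosen for the example, not a limit published by Anthropic, and `downsample_size` is an illustrative helper rather than part of any SDK.

```python
def downsample_size(width: int, height: int, max_long_edge: int) -> tuple[int, int]:
    """Return (width, height) scaled so the longer edge is at most max_long_edge.

    Aspect ratio is preserved; images that are already small enough are unchanged.
    """
    if width <= 0 or height <= 0 or max_long_edge <= 0:
        raise ValueError("dimensions must be positive")
    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height
    scale = max_long_edge / long_edge
    return max(1, round(width * scale)), max(1, round(height * scale))


# Example: a 4000x3000 screenshot capped at a hypothetical 1600 px long edge.
print(downsample_size(4000, 3000, 1600))  # (1600, 1200)
```

The resulting size can then be handed to whatever image library you already use (for example, Pillow's `Image.resize`) before the image is encoded and sent.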

3. The Biggest Regression: Long Context Performance Plummets

MRCR v2 @1M (a long-context memory test at one million tokens) dropped by 46 percentage points, from 78.3% to 32.2%, that is, from nearly 80% to roughly one-third.

This level of decline is almost unprecedented in flagship model iterations. MRCR v2 was heavily promoted by Anthropic during the Opus 4.6 era, with the original statement being that a “qualitative change occurred in the amount of context a model can actually use.” By 4.7, this “qualitative change” has vanished.

Why did this happen? The tokenizer changed.

Opus 4.7 uses a new tokenizer, which generates approximately 1.0-1.35 times the number of tokens for the same input text, with the specific multiplier varying by content type.
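To make the multiplier concrete, here is a small sketch of the arithmetic. It assumes the context window is a fixed number of new-tokenizer tokens; the 1M figure is used only because the benchmark is named "@1M", and the helper names are illustrative. It shows how much less text fits in the same window, and it does not by itself explain the benchmark score.

```python
# Range quoted in the article: new tokens produced per old token of the same text.
TOKENIZER_MULTIPLIER_RANGE = (1.0, 1.35)


def new_token_range(old_tokens: int) -> tuple[int, int]:
    """Estimate how many new-tokenizer tokens the same text will occupy."""
    low, high = TOKENIZER_MULTIPLIER_RANGE
    return round(old_tokens * low), round(old_tokens * high)


def text_capacity_in_old_tokens(window_tokens: int) -> tuple[int, int]:
    """How much text, measured in old-tokenizer tokens, fits a fixed window."""
    low, high = TOKENIZER_MULTIPLIER_RANGE
    return int(window_tokens / high), int(window_tokens / low)


print(new_token_range(100_000))                # (100000, 135000)
print(text_capacity_in_old_tokens(1_000_000))  # (740740, 1000000)
```

In the worst case of the quoted range, a window that previously held one million tokens of text holds the equivalent of about 740K.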

The direct chain reaction is:

Anthropic’s official statement claims the new tokenizer “improves text processing efficiency,” but benchmark data shows a clear regression in long-context scenarios.

Search capabilities have also regressed:

Searching and long text are precisely the scenarios most frequently used by many enterprise users.

Developer feedback on Hacker News (a post with 275 upvotes and 215 comments) shows that actual users are reporting these same issues. However, this too is a choice Anthropic made.

4. New Behavioral Features: Self-Verification and More Literal Instruction Following

A noteworthy statement in the Opus 4.7 official announcement is that the model will verify its outputs before reporting results.

The technical team at Hex provided a specific example during testing: when data is missing, Opus 4.7 will accurately report “data does not exist” rather than providing a seemingly reasonable but fabricated answer, which was a pitfall for Opus 4.6. The fintech platform Block commented, “It can identify its logical errors during the planning stage, accelerating execution speed, showing a clear improvement over previous Claude models.”

However, self-verification has brought about another behavioral change: Opus 4.7 interprets instructions more literally.

This presents a significant migration risk. If you have finely tuned prompts for Opus 4.6, 4.7 may not infer the unstated nuances in the same way; instead, it executes strictly according to what you wrote. Anthropic explicitly mentions this in the official migration guide and recommends regression testing key prompts before deploying 4.7.

As a practical reference point, Hex’s CTO reports that the low effort tier of Opus 4.7 performs at roughly the level of the medium effort tier of Opus 4.6.
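One way to approach the regression testing mentioned above is a small harness that flags prompts whose checks pass on the old model but fail on the new one. This is a minimal sketch, not Anthropic's recommended tooling: `ModelFn`, `regression_report`, and the two fake models are hypothetical stand-ins, and in practice each would wrap a real API call.

```python
from typing import Callable, Iterable

# Hypothetical interface: takes a prompt, returns the model's text output.
ModelFn = Callable[[str], str]
Check = Callable[[str], bool]


def regression_report(
    cases: Iterable[tuple[str, Check]],
    old_model: ModelFn,
    new_model: ModelFn,
) -> list[str]:
    """Return prompts whose check passes on the old model but fails on the new one."""
    regressions = []
    for prompt, check in cases:
        if check(old_model(prompt)) and not check(new_model(prompt)):
            regressions.append(prompt)
    return regressions


# Stand-in models: the "old" one guesses that bullets are wanted,
# the "new" one follows the prompt literally and writes a sentence.
def fake_old(prompt: str) -> str:
    return "- point one\n- point two"


def fake_new(prompt: str) -> str:
    return "Point one and point two."


cases = [("Summarize the notes.", lambda out: out.startswith("- "))]
print(regression_report(cases, fake_old, fake_new))  # ['Summarize the notes.']
```

A prompt that shows up in the report is a candidate for rewriting so that the expected format is stated explicitly rather than implied.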

5. Reasoning Control Mechanisms: xhigh, Task Budgets, and /ultrareview

During the Opus 4.6 era, two decisions damaged user trust: on February 9, adaptive thinking became the default mode, and on March 3, the default reasoning depth for Claude Code was lowered from the highest tier to medium, justified as “balancing intelligence, latency, and cost.” Users labeled this the “dumbing down gate,” and a GitHub post on the issue by a senior director at AMD was widely shared.

Opus 4.7 responds by making reasoning depth control more explicit for users.

xhigh Effort Tier:

A new reasoning intensity level between the existing high and max tiers. Claude Code now defaults to xhigh on all plans.

However, the developer community has a direct question about xhigh, with a Reddit user stating, “Opus 4.6 defaulted to medium, and 4.7 defaults to xhigh. I want to know the reasoning behind this decision, as increasing the effort tier will obviously lead to higher token consumption.”

In other words, users may see this as a fix that returns control to them, but in practice the default tier has been raised, so the same tasks will consume more tokens. Combined with the tokenizer change, costs rise on two fronts.

Task Budgets (in public beta):

A token budget control mechanism for long tasks. Developers set a total token budget (minimum 20K), and the model can see the remaining budget in real time during execution. It allocates resources accordingly, which avoids stopping midway because the budget ran out and prevents unnecessary compute waste.
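The budgeting idea can be illustrated with a toy model. The sketch below is not the Task Budgets API; `TaskBudget` and `plan_steps` are hypothetical names, and the per-step token estimates are invented for the example. Only the 20K minimum comes from the description above.

```python
from dataclasses import dataclass

MIN_BUDGET_TOKENS = 20_000  # minimum quoted in the article


@dataclass
class TaskBudget:
    """Toy tracker for a total token budget shared across the steps of one task."""
    total_tokens: int
    used_tokens: int = 0

    def __post_init__(self) -> None:
        if self.total_tokens < MIN_BUDGET_TOKENS:
            raise ValueError(f"budget must be at least {MIN_BUDGET_TOKENS} tokens")

    @property
    def remaining(self) -> int:
        return self.total_tokens - self.used_tokens

    def spend(self, tokens: int) -> None:
        if tokens > self.remaining:
            raise RuntimeError("step would exceed the task budget")
        self.used_tokens += tokens


def plan_steps(budget: TaskBudget, steps: list[tuple[str, int]]) -> list[str]:
    """Run steps in order while they fit; stop before the first one that does not."""
    completed = []
    for name, estimated_tokens in steps:
        if estimated_tokens > budget.remaining:
            break
        budget.spend(estimated_tokens)
        completed.append(name)
    return completed


budget = TaskBudget(total_tokens=50_000)
steps = [("read files", 15_000), ("write patch", 25_000), ("run full test suite", 20_000)]
print(plan_steps(budget, steps), budget.remaining)  # ['read files', 'write patch'] 10000
```

The point of the design is that the remaining budget is visible before each step, so the task can wind down cleanly instead of being cut off mid-step.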

Claude Code has added the /ultrareview command: a specialized code review session focused on bug detection and design issues, with Pro and Max users receiving three free uses per month.

Auto mode is now available to Max users; it was previously limited to the Enterprise plan. In auto mode, Claude can make decisions independently, reducing the number of times it needs to ask users midway. Boris Cherny, head of the Claude Code team, stated, “Give Claude a task, let it run, and come back with verified results.”

6. Performance Overview: Wins and Losses

Here is the main benchmark data currently available (source: Anthropic’s official system card and partner evaluations).

Programming and Engineering (Opus 4.7 leads)

Visual and Multimodal (Opus 4.7 significantly leads)

Knowledge Work (Opus 4.7 leads)

Comprehensive Evaluation (Opus 4.7 shows clear improvement)

General Reasoning (three models roughly equal)

This benchmark has reached saturation and is no longer an effective competitive dividing line.

Research Tasks (GPT-5.4 leads, Opus 4.7 regresses)

Long Context (Opus 4.7 significantly regresses)

Summary of Selection Logic:

Opus 4.7 has clear advantages in programming, engineering agents, visual tasks, and financial/legal knowledge work; GPT-5.4 is stronger in research-intensive tasks and open web retrieval; in long-context scenarios, Opus 4.7 is significantly worse than its predecessor, which is the most concerning point.

7. Safety Measures: The Foundation for Mythos

This section is often overlooked as a “routine safety statement” in release notes, but it is key to understanding Anthropic’s current strategy.

On April 7, Anthropic announced Project Glasswing: opening Claude Mythos Preview to nine partners including Apple, Google, Microsoft, Nvidia, Amazon, Cisco, CrowdStrike, JPMorgan Chase, and Broadcom, specifically for defensive cybersecurity scenarios.

Mythos is Anthropic’s most capable model to date. It can reportedly discover zero-day vulnerabilities autonomously and has identified thousands of previously unknown vulnerabilities in major operating systems and browsers. Because of this capability, it is considered to carry a significant risk of abuse and has not been publicly released.

Opus 4.7 serves as the first test case along this line. During training, Anthropic deliberately reduced the model’s offensive cybersecurity capabilities while attempting to retain its defensive abilities, and implemented a real-time barrier system that automatically detects and blocks high-risk cybersecurity requests. The original announcement stated, “We will learn from the actual deployment of Opus 4.7 to determine whether this barrier is effective before deciding whether to promote it to Mythos-level models.”

In other words, every developer using Opus 4.7 is helping Anthropic calibrate the boundaries of safety barriers.

Gizmodo commented that this release employs a “bold marketing strategy, actively promoting its new model as ‘less capable than other options,’” which is extremely rare in flagship releases.

Security practitioners who need to use Opus 4.7 for legitimate penetration testing, vulnerability research, or red team testing must apply to join the Cyber Verification Program.

8. Pricing and Migration: Nominally Unchanged, Actually Increased

Pricing:

Input costs $5 per million tokens and output costs $25 per million tokens, the same as Opus 4.6. The API model ID is claude-opus-4-7. The model is available on the Claude API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry, and GitHub Copilot has added it as well.

However, as mentioned earlier, the tokenizer change produces approximately 1.0-1.35 times as many tokens for the same input. Combined with higher default effort tiers, which consume more tokens in long-running agent workflows, actual costs could be 2-3 times those of Opus 4.6 under the same settings.
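A back-of-the-envelope calculation helps separate the two cost drivers. The sketch below uses the listed prices and the tokenizer range from the text; the workload (1M input and 200K output tokens) and the 1.8x output growth attributed to a higher effort tier are hypothetical numbers chosen for illustration, and it assumes the tokenizer multiplier applies to output as well as input.

```python
INPUT_USD_PER_MTOK = 5.0    # listed price per million input tokens
OUTPUT_USD_PER_MTOK = 25.0  # listed price per million output tokens


def cost_usd(input_tokens: float, output_tokens: float) -> float:
    """Cost of one workload at the listed per-million-token prices."""
    return (input_tokens * INPUT_USD_PER_MTOK
            + output_tokens * OUTPUT_USD_PER_MTOK) / 1_000_000


def cost_ratio(input_tokens: float, output_tokens: float,
               tokenizer_multiplier: float, effort_output_growth: float) -> float:
    """New cost divided by old cost for the same workload.

    Assumes the tokenizer multiplier inflates both input and output, and that
    a higher effort tier only inflates output (reasoning) tokens.
    """
    old = cost_usd(input_tokens, output_tokens)
    new = cost_usd(input_tokens * tokenizer_multiplier,
                   output_tokens * tokenizer_multiplier * effort_output_growth)
    return new / old


# Tokenizer change alone, at the top of the quoted range.
print(f"{cost_ratio(1_000_000, 200_000, 1.35, 1.0):.2f}")  # 1.35
# Tokenizer change plus a hypothetical 1.8x growth in output tokens.
print(f"{cost_ratio(1_000_000, 200_000, 1.35, 1.8):.2f}")  # 1.89
```

Under these assumptions the tokenizer alone cannot push costs past 1.35x; reaching the 2-3x range depends on how much extra output the higher effort tier generates for a given workload.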

Anthropic has also reduced the cache TTL for Claude Code from one hour to five minutes. If you leave your computer for more than five minutes, the context cache expires and must be reloaded, which consumes tokens faster. Many users in the Reddit community have already complained that “the quota burns through tokens like a waterfall.”

Breaking changes for existing Opus 4.6 users:
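The effect of the shorter TTL can be sketched with a simple count of how often a session finds its cache expired. This is a toy model under stated assumptions: the cache lifetime is refreshed on every request, the request timestamps are invented, and `count_cache_reloads` is an illustrative helper, not a description of how Claude Code manages its cache internally.

```python
def count_cache_reloads(request_times_s: list[float], ttl_s: float) -> int:
    """Count requests that arrive after the cache has expired.

    Assumes each request refreshes the TTL, so only the gap since the
    previous request matters. The first request is not counted.
    """
    reloads = 0
    for previous, current in zip(request_times_s, request_times_s[1:]):
        if current - previous > ttl_s:
            reloads += 1
    return reloads


# Hypothetical session: gaps of 2, 7, 16, 1, and 64 minutes between requests.
session = [0, 120, 540, 1500, 1560, 5400]

print(count_cache_reloads(session, ttl_s=3600))  # 1  (one-hour TTL)
print(count_cache_reloads(session, ttl_s=300))   # 3  (five-minute TTL)
```

In this example, ordinary pauses of seven or sixteen minutes are harmless with a one-hour TTL but each trigger a full context reload with a five-minute TTL.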

Practical Advice:

Anthropic’s official migration guide recommends running Opus 4.7 on representative production traffic first, then deciding based on a comparison of token consumption and task quality.

Opus 4.7 is a targeted upgrade with clear costs. These trade-offs are by design, and to a large extent, users are the ones who pay for them.

The progress of this model includes:

- Improvements in programming and visual capabilities.

The downsides include:

- Significant regressions in long context and search capabilities.

- Nominally unchanged pricing, but actual costs are rising.

Anthropic is using Opus 4.7 to strike a balance: repairing the trust damage left by Opus 4.6 while running real-world trials of safety barriers ahead of broader releases of Mythos-level models. More importantly, it aims to leverage its current leading position, transforming user affinity for its products into a kind of dependency, akin to the love-hate user loyalty seen in mature companies like Apple, and establishing a truly commercially valuable ecosystem.
